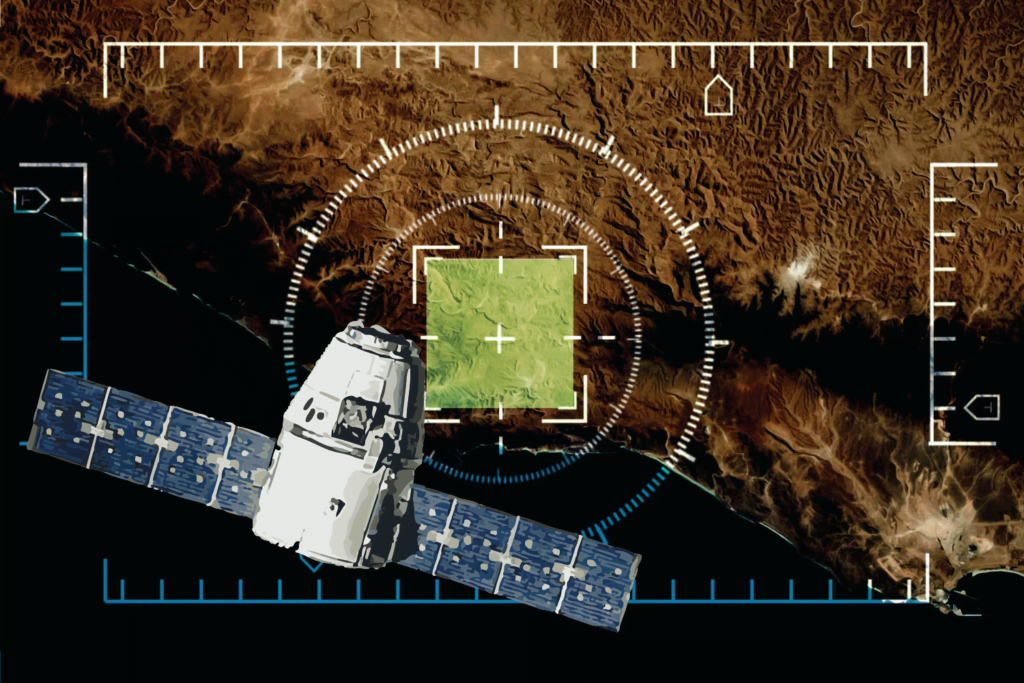

A new algorithm can detect faked satellite images with a high degree of accuracy, but the best safeguard is an informed public.

A people’s-eye view of a street in Odessa, Ukraine: Kevin Dooley. CC-BY-2.0: https://www.flickr.com/photos/12836528@N00/9273967082

Maps have always told white lies. To paraphrase Mark Monmonier in his classic book How to Lie with Maps, representing a curving Earth on a flat plane necessarily involves some distortion. Fake satellite images are different: the apparent authority of a satellite photograph can make us forget that it has the same vulnerabilities as any other piece of data.

We know fake satellite images exist. The question is whether we can detect them – and how reliably. A new machine-learning algorithm can spot a particular kind of faked satellite imagery with 94 percent accuracy, but data literacy is the best way to sort reliable images from unreliable ones.

Generative Adversarial Networks (GANs) are frequently used to create convincing deep-fake media. Sometimes the results are less than convincing, as with the recent fake videos of Ukrainian president Volodymyr Zelensky.

Using a type of GAN called CycleGAN, researchers created fake maps of Tacoma, a city in the US state of Washington. The fake maps included some features of Seattle, Washington, and the Chinese city of Beijing.

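GANs consist of a generator network and a discriminator network. The generator produces candidate fakes and the discriminator tries to tell them apart from real examples. Through repeated rounds of tuning, each improves against the other until the generator produces a convincing fake according to the characteristics of the data it is aiming to simulate.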
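
The generator-and-discriminator loop can be sketched in a few lines of Python. This is a deliberately tiny illustration, not the CycleGAN used by the researchers: the "data" are single numbers rather than images, and the function name `train_toy_gan`, the target mean of 4.0, the learning rate and the step count are all assumptions chosen for the example.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=50, batch=32, lr=0.05, real_mean=4.0, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: p(real) = sigmoid(w * x + c)
    for _ in range(steps):
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]

        # Discriminator step: raise p(real) on real samples, lower it on fakes.
        gw = gc = 0.0
        for x in real:
            p = sigmoid(w * x + c)
            gw += -(1.0 - p) * x
            gc += -(1.0 - p)
        for x in fake:
            p = sigmoid(w * x + c)
            gw += p * x
            gc += p
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: adjust a and b so the discriminator rates fakes as real.
        ga = gb = 0.0
        for z in noise:
            p = sigmoid(w * (a * z + b) + c)
            ga += -(1.0 - p) * w * z
            gb += -(1.0 - p) * w
        a -= lr * ga / batch
        b -= lr * gb / batch
    return {"a": a, "b": b, "w": w, "c": c}


params = train_toy_gan()
print(params)  # "b" is the centre of the fakes; it starts at 0.0
```

In this sketch the generator's offset `b` starts at 0.0 and is pushed towards the real data by the discriminator's feedback. A model that fakes satellite imagery applies the same tug-of-war to millions of pixel values instead of one number.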

As the GANs working to produce a convincing fake map of Tacoma went through the tuning process, the map grew sharper: shadowed areas gave way to simulated roads, detail increased, and areas intended to show land and water acquired more natural-looking colour.

To the naked eye, the fake map of Tacoma looked authentic.

The machine-learning algorithm sorted through a deep-fake detection dataset consisting of genuine satellite images of Tacoma as well as the faked images. With a success rate of 94 percent, the algorithm picked out the fakes – they were slightly less colourful and had sharper edges than the genuine images.
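
The two tell-tale signs mentioned above, duller colour and sharper edges, can each be expressed as a simple number. The sketch below is not the researchers' detector; it only illustrates what such features might look like. The function names, the way each score is defined, and the two tiny 2×2 "images" are invented for the example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255
Image = List[List[Pixel]]     # rows of pixels; at least 2 pixels in total


def colourfulness(img: Image) -> float:
    """Mean gap between the strongest and weakest colour channel per pixel."""
    spreads = [max(p) - min(p) for row in img for p in row]
    return sum(spreads) / len(spreads)


def edge_sharpness(img: Image) -> float:
    """Mean absolute brightness change between neighbouring pixels."""
    grey = [[sum(p) / 3.0 for p in row] for row in img]
    diffs = []
    for y, row in enumerate(grey):
        for x, value in enumerate(row):
            if x + 1 < len(row):           # neighbour to the right
                diffs.append(abs(value - row[x + 1]))
            if y + 1 < len(grey):          # neighbour below
                diffs.append(abs(value - grey[y + 1][x]))
    return sum(diffs) / len(diffs)


# Made-up pixel values, purely for illustration.
genuine_like = [[(200, 100, 50), (190, 110, 60)],
                [(180, 100, 60), (170, 110, 70)]]
fake_like = [[(120, 110, 100), (40, 35, 30)],
             [(125, 115, 105), (45, 40, 35)]]

for name, img in [("genuine-like", genuine_like), ("fake-like", fake_like)]:
    print(name, colourfulness(img), round(edge_sharpness(img), 2))
```

With these invented values the "fake-like" grid scores lower on colourfulness and higher on edge sharpness. A real detector would learn which combinations of many such features separate genuine images from fakes, rather than relying on two hand-picked scores.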

There is further work to do to develop the algorithm. It must of course be tested on datasets of other cities. It is effective with CycleGAN images but may not be as effective with other GAN models. And it is currently limited to a binary result – entirely genuine or entirely fake – so it cannot detect when only part of a map has been faked.

Beyond algorithms, though, there is data literacy.

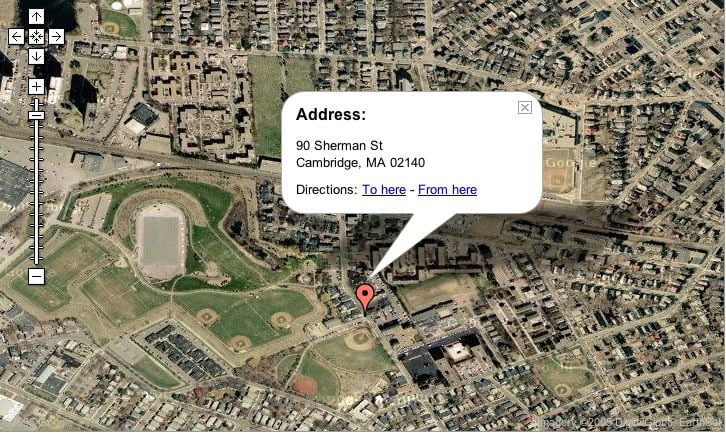

Any satellite image has its limits as a data source. Images are captured from above, so unless a particular satellite has specialised sensing capabilities, such as a synthetic aperture radar (SAR) that can take images through cloud, it cannot show that there are people below an opaque structure. For example, a satellite image of the destroyed bridge in the Ukrainian city of Irpin would not be able to convey that there were people sheltering beneath it. Michael Goodchild wrote about ‘citizen sensors’ in a 2007 article, and this idea has particular relevance to images of conflict zones. Journalists, volunteers and NGO staff can provide detail about what is happening on the ground.

A satellite image is a single data point, but a holistic understanding of a geographical situation needs a variety of data sources. These should come not only from the perspective of a satellite, which in geographic information science is referred to as the “god’s eye”, but also from the perspective of people’s eyes – images from smartphones, cameras or drones. Our perception of the conflict in Ukraine can be informed by short videos on TikTok and photographs on Twitter. With a range of data sources, we can build a more complete picture.

High-resolution satellite imagery with superb data quality is available – but it usually comes at a cost. US satellite-image distributor Maxar provided high-resolution imagery to the Ukrainian government for free, but most high-resolution satellite images for public consumption are very expensive. Maxar’s daily updated image from its WorldView-3 satellite was priced at US$22.50 per square kilometre. To make the best use of their resources, most media platforms purchased high-impact images likely to draw audiences: images of destroyed buildings and bridges, and city blocks reduced to rubble. Few satellite images of humanitarian corridors appeared online.
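
To see how quickly the quoted price adds up, here is a back-of-the-envelope calculation. The US$22.50 per square kilometre figure comes from the paragraph above; the 100-square-kilometre area, the seven-day period and the function name `imagery_cost_usd` are assumptions for illustration, and the sketch ignores any discounts or minimum order sizes a distributor might apply.

```python
PRICE_PER_KM2_USD = 22.50  # per-square-kilometre price quoted in the article


def imagery_cost_usd(area_km2: float, days: int = 1) -> float:
    """Cost of one image per day over an area, at a flat per-km2 price."""
    if area_km2 < 0 or days < 0:
        raise ValueError("area and days must be non-negative")
    return PRICE_PER_KM2_USD * area_km2 * days


# Hypothetical area of interest: 100 square kilometres.
print(imagery_cost_usd(100))          # 2250.0
print(imagery_cost_usd(100, days=7))  # 15750.0
```

At that rate, a single day's image of even a modest area costs thousands of dollars, which helps explain why newsrooms concentrated their budgets on the most striking scenes.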

Satellite imagery is still in high demand as a way to better allocate humanitarian aid to people forced to flee. More and more NGOs have become aware of the crucial role of satellite imagery in humanitarian work during conflicts. Free satellite images from https://www.ukraineobserver.earth were a vital resource in supporting the emergency response to the Ukraine conflict.

It is important that consumers of satellite images – both journalists and the public – maintain a critical perspective and look at a range of data sources to assess whether these images are reliable. A fact-checking platform that allows the public to validate satellite imagery would be a great help too.

Methods of distorting satellite images will only grow more sophisticated. Ways to keep pace with the fakery can be found, but there is no substitute for a vigilant, data-literate public.

Originally published under Creative Commons by 360info™.

Dr Bo Zhao is an associate professor of geography at the University of Washington. His research focuses on geographical misinformation and the social implications of geospatial technologies, especially as they relate to the interests of vulnerable populations.

Dr Zhao has declared no conflicts of interest in relation to this article.