Deepfakes are threatening privacy and security. Detection methods using deep learning aim to combat this but there’s a long way to go.

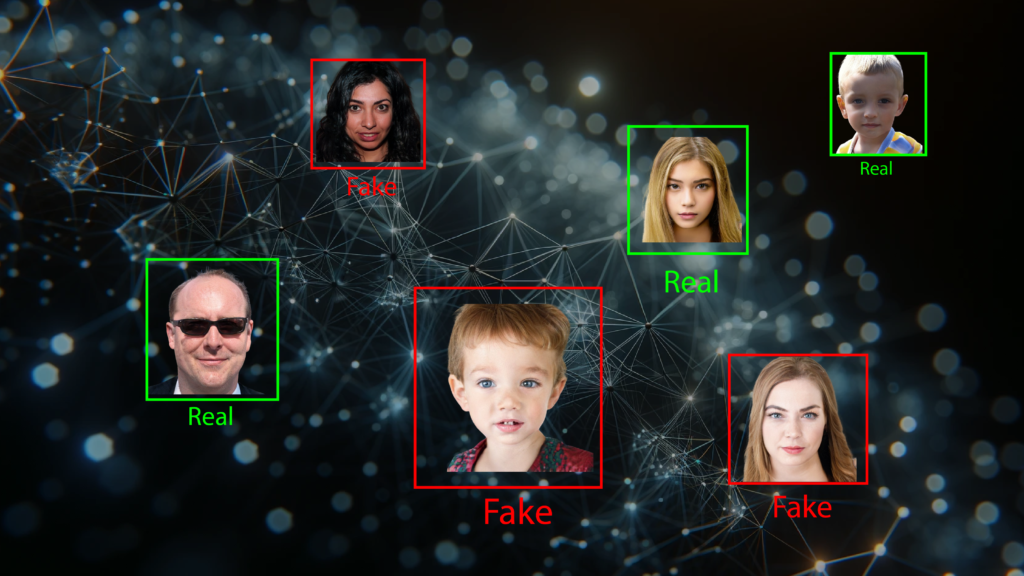

As the digital landscape evolves, the battle against deepfakes has ushered in a new era of detection methods. Photo provided by the author. Single images via Tero Karras, Samuli Laine and Timo Aila (NVIDIA), CC BY 2.0.

It started in 2017, when Reddit users uploaded sexually explicit videos in which the faces of female celebrities were superimposed onto the bodies of adult film actresses.

The emergence of such disturbing videos was a result of a sophisticated deep learning algorithm known as “deepfake”.

It sent shockwaves through the world, threatening privacy and societal security. Within a year, Reddit and other online platforms banned these deepfake porn videos, but the problem did not stop there.

This advanced technique enables the replacement of one person’s likeness with another’s. Some popular examples include applying filters to alter facial attributes; swapping faces with celebrities; transferring facial expressions; or generating a new selfie based on an original photo.

The malicious use of deepfakes can cause severe psychological harm and tarnish reputations, making the technology a powerful tool capable of inciting social panic and threatening world peace.

The development of robust deepfake detectors, which are trained to identify the distinct features that distinguish a fake image from a real one, is crucial.

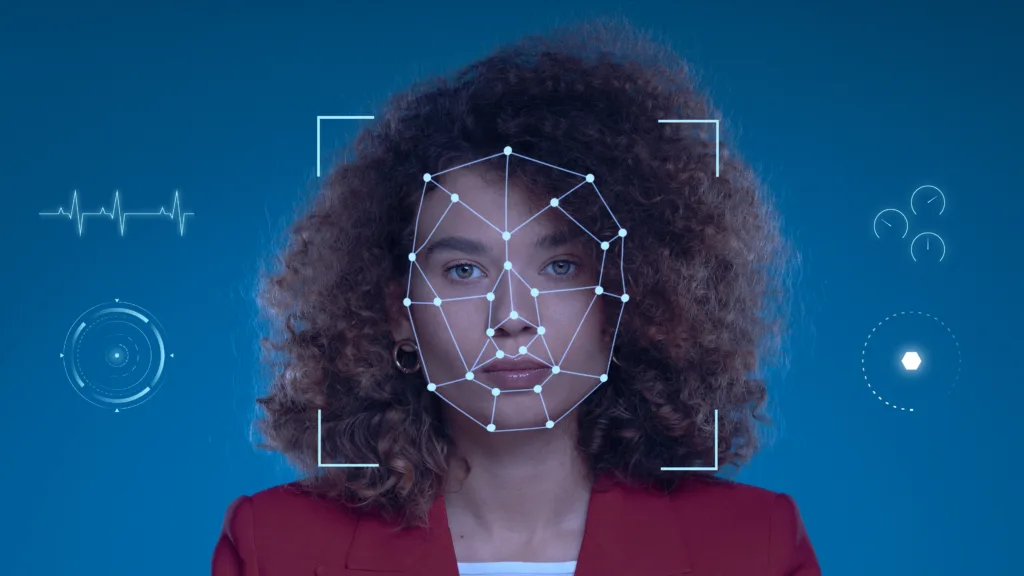

Traditional approaches focus on analysing inconsistencies in pixel distribution, which show up as unusual biometric artefacts and facial textures. Unnatural skin texture, odd shadowing, and abnormal placement of facial attributes serve as key indicators for deepfake detection.
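
To make the idea of a hand-crafted pixel feature concrete, here is a minimal sketch in Python. It is only an illustration, not a method described in this article: the function names, the tiny greyscale patches and the thresholds are all hypothetical. It scores how sharply neighbouring pixels differ and flags patches that look unnaturally smooth or unnaturally noisy.

```python
def neighbour_roughness(image):
    """Mean absolute difference between adjacent pixels (hypothetical feature)."""
    rows, cols = len(image), len(image[0])
    total, count = 0, 0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:  # compare with the pixel to the right
                total += abs(image[r][c] - image[r][c + 1])
                count += 1
            if r + 1 < rows:  # compare with the pixel below
                total += abs(image[r][c] - image[r + 1][c])
                count += 1
    return total / count if count else 0.0


def looks_suspicious(image, low=2.0, high=60.0):
    """Flag patches that are too smooth or too noisy.

    The thresholds are illustrative assumptions, not calibrated values.
    """
    score = neighbour_roughness(image)
    return score < low or score > high


# A perfectly flat patch (over-smoothed "skin") and a mildly textured one.
smooth_patch = [[120] * 4 for _ in range(4)]
textured_patch = [
    [100, 110, 105, 115],
    [108, 102, 112, 104],
    [101, 111, 103, 113],
    [109, 100, 110, 106],
]

print(looks_suspicious(smooth_patch))    # flat patch is flagged
print(looks_suspicious(textured_patch))  # natural-looking texture is not
```

A rule like this has to be designed and tuned by hand for every artefact, which is the tedious part of the traditional approach.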

However, the evolution of deep learning technology, such as diffusion models, transformers, and Generative Adversarial Networks (GANs), has made conventional approaches vulnerable.

GANs are the leading technique in deepfake generation. A GAN consists of two models: a generator that creates images and a discriminator that attempts to distinguish whether an image is real or fake.

Initially, deepfake images might display noticeable flaws in pixel distribution. Yet, guided by feedback from the discriminator, the generator learns from its successes and failures to refine its technique. Over time, through continuous training, the generator becomes increasingly adept at producing images that are indistinguishable from real ones.
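
The feedback loop between the two models can be sketched with a deliberately tiny toy in Python. This is an assumption-laden illustration rather than a real deepfake generator: each "image" is a single number, the "real" data are assumed to cluster around 4, the generator is a straight line and the discriminator a logistic curve. All names and values are hypothetical.

```python
import math
import random


def sigmoid(s):
    # Numerically stable logistic function.
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def generate(g, z):
    # Generator: turns random noise z into a fake sample.
    a, b = g
    return a * z + b


def discriminate(d, x):
    # Discriminator: estimated probability that x is real.
    w, c = d
    return sigmoid(w * x + c)


def discriminator_step(d, g, reals, noises, lr):
    # Gradient ascent on log D(real) + log(1 - D(fake)).
    w, c = d
    grad_w = grad_c = 0.0
    for x in reals:
        p = discriminate(d, x)
        grad_w += (1.0 - p) * x
        grad_c += 1.0 - p
    for z in noises:
        fake = generate(g, z)
        p = discriminate(d, fake)
        grad_w -= p * fake
        grad_c -= p
    n = len(reals) + len(noises)
    return (w + lr * grad_w / n, c + lr * grad_c / n)


def generator_step(d, g, noises, lr):
    # Gradient ascent on log D(fake): the generator is rewarded for fooling D.
    a, b = g
    w, _ = d
    grad_a = grad_b = 0.0
    for z in noises:
        p = discriminate(d, generate(g, z))
        grad_a += (1.0 - p) * w * z
        grad_b += (1.0 - p) * w
    n = len(noises)
    return (a + lr * grad_a / n, b + lr * grad_b / n)


def train(steps=200, batch=16, lr=0.05, seed=0):
    # Alternate the two updates, as in standard GAN training.
    rng = random.Random(seed)
    d, g = (0.0, 0.0), (1.0, 0.0)
    for _ in range(steps):
        reals = [rng.gauss(4.0, 0.5) for _ in range(batch)]  # assumed "real" data
        noises = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        d = discriminator_step(d, g, reals, noises, lr)
        g = generator_step(d, g, noises, lr)
    return d, g
```

In the first update the discriminator learns that larger values look more real. The generator's next update then shifts its output in that direction, which is the feedback described above. Toy setups like this can oscillate rather than settle, so the sketch shows the mechanism, not a guarantee of convergence.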

As the digital landscape evolves, the battle against deepfakes has ushered in a new era of detection methods. These increasingly rely on deep learning rather than on the tedious process of manually crafting features.

These methods take a “black box” approach, using convolutional neural networks for feature extraction. The model automatically learns discriminative features directly from the training data, which streamlines the deepfake detection process.
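
As a rough illustration of what a convolutional layer computes, the Python sketch below slides a small filter over an image, keeps the positive responses and averages them into one feature value. In a real network the filter weights are learned from training data. Here the filter is fixed by hand, and the names and the 4×4 image are assumptions made for the example.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    As in most deep learning libraries, this is technically cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    # Keep positive responses only.
    return [[max(0, v) for v in row] for row in feature_map]


def global_average(feature_map):
    # Collapse a feature map into a single number.
    values = [v for row in feature_map for v in row]
    return sum(values) / len(values)


# Hypothetical 4x4 image: dark on the left, bright on the right.
edge_image = [[0, 0, 1, 1] for _ in range(4)]

# Hand-picked filter that responds to dark-to-bright vertical edges.
# A trained CNN would learn weights like these instead.
vertical_edge_kernel = [[-1, 1], [-1, 1]]

feature_map = relu(conv2d(edge_image, vertical_edge_kernel))
print(feature_map)                  # strongest response in the middle column
print(global_average(feature_map))  # one scalar feature for a classifier
```

Stacking many such learned filters, layer upon layer, is what allows the network to discover its own indicators instead of relying on manually crafted ones.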

Against this backdrop, researchers are working to develop detection models that target deepfake images from diverse sources, both to improve effectiveness in real-world scenarios and to minimise the computational resources needed during training.

Their goal is to restructure these models into user-friendly tools that can be seamlessly integrated with social media platforms and applications.

But the journey doesn’t end there. For developers and researchers, continuous learning and adaptation become paramount.

It is crucial to ensure that the detection models remain effective against the evolving techniques used in deepfake generation, which present challenges in detection and responsible use.

Tackling these challenges requires significant technical innovation as well as ethical foresight, coordinated policy, alignment with tools, and broad public dialogue.

Only such a comprehensive strategy can truly advance the technology, and do so responsibly and widely enough for it to be effective, used as intended, and widely accepted.

“Seeing is believing.” In an era where deepfakes blur the line between reality and fiction, the pursuit of truth becomes more important than ever.

The development of deepfake detection technology is far more than a technical challenge. It is a beacon in our unrelenting pursuit of authenticity.

Here, we are reminded that even as our eyes may be deceived, our dedication to preserving accuracy ensures that reality will always flicker through.

In the war against digital deception, deepfake detection remains our watchful sentry, ensuring that even in the age of deepfakes, seeing can still mean believing.

Dr Scarlett Seow received her PhD in Information Technology from Monash University in 2023. Her research focuses on the design and development of a reliable detection model that is robust against most deepfake generation methods and adversarial attacks. She is currently a sessional tutor at Monash University Malaysia.

Originally published under Creative Commons by 360info™.