Generative AI doesn’t like to credit its sources. For artists, that’s a problem.

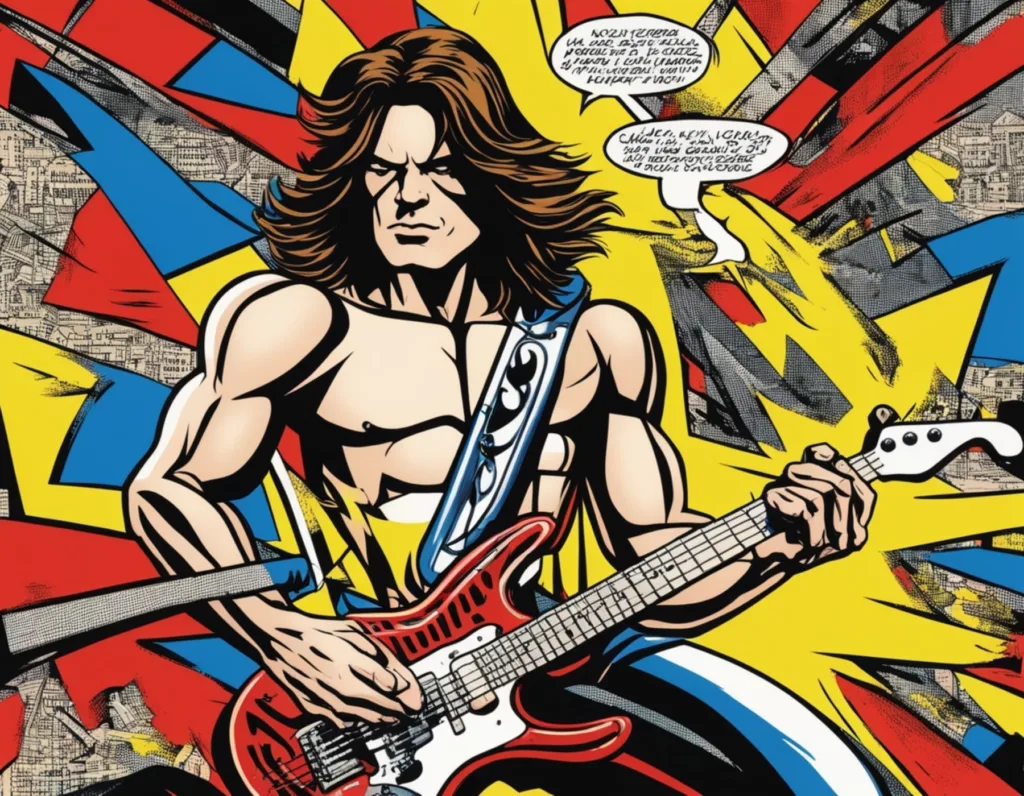

Pop art generator: “Eddie Van Halen”, via deepai.org

In a few short years, generative AI techniques have exploded into a revolutionary new type of creative tool.

Using mere text descriptions, it’s now possible to conjure creative works into existence, as if by magic. People have rightly been quick to compare this revolution to the invention of photography, the phonograph, synthesisers or the internet.

Like earlier creative technologies, AI is disrupting existing creative practices. But more than just competing with traditional creative methods, leading generative AI tools also exploit the creative labour and talent embodied in the earlier work used to train them.

Thus, a fierce debate is underway about how rights relating to the material employed to train generative AI tools should be treated.

One camp, including the emerging AI giants OpenAI and StabilityAI, argues that it is “fair use” to scrape the world’s creative archive to train generative AI models. Are these AI models not, after all, just like people, learning about art and aesthetics from history and the world around them?

The other camp consists largely of artists and other copyright holders. Even if they might appreciate this view philosophically (and many do not), they still understand their existing intellectual property rights to include the right to refuse the use of their creative work as AI training data. In their view, companies need their permission.

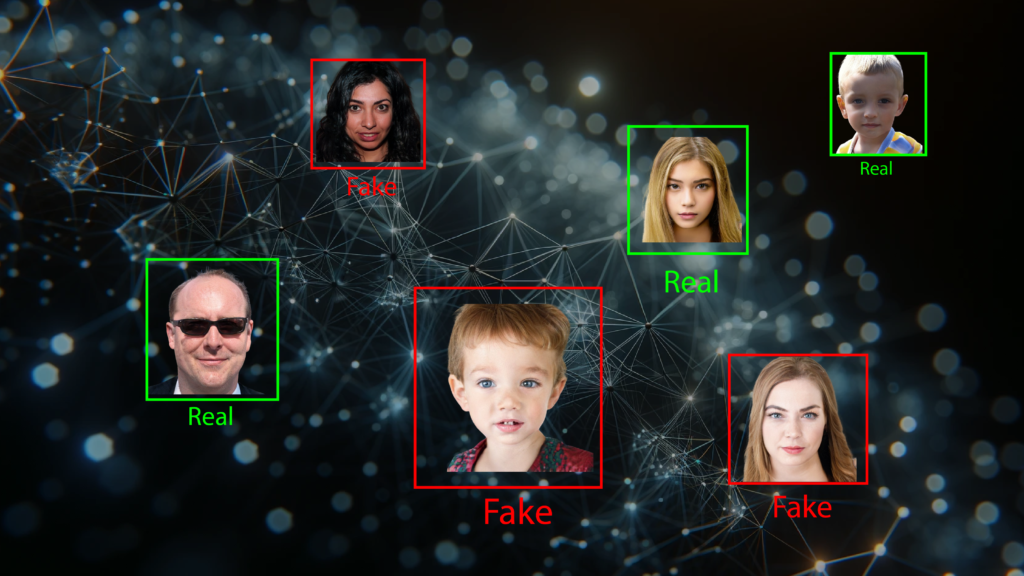

At heart, this division is confounded by the nature of generative AI: generated outputs do not directly reference training data inputs, but digest and regurgitate them. There is no given means of attributing individual sources.

Even for creative industries accustomed to disruption, the situation is a genuine crisis – in part because it unsettles an established socioeconomic consensus, albeit one that is far from perfect.

Creative attribution is itself a social construct. The existing means we have at our disposal to discern and honour the value of individual creative works are the products of collisions and compromise between technology, law and creative practice.

Musical artists know that the economics of a single track break down into distinct intellectual property components, beginning with songwriting. Long before the advent of audio recording, a song, encoded on paper as sheet music, enjoyed copyright protection. Well over a century later, performances and audio recordings are now, in turn, robust copyrightable entities.

For example, if you were to listen on Spotify to Sinead O’Connor’s version of Nothing Compares 2 U, a song written by Prince, then 15 percent of some fraction of a cent goes in the direction of Prince’s estate or representatives for the songwriting part, the rest in the direction of O’Connor’s estate, or her label, performers, and so on, for the rendering of that song as recorded sound.

This 15 percent of a track’s value lying in the ‘song’ is a social construct, the outcome of negotiations between recording companies, publishers, streaming services and artists.

When generative AI creates outputs, there is no clear line of attribution back to specific training sources. The alternative is to pay a fixed fee for the data.

Machine learning depends on enormous amounts of training data. If an AI company were to pay a fixed fee for the use of that data, then any individual artist’s remuneration would be a minuscule slice of a very big pie.
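To get a feel for how small that slice could be, here is a minimal Python sketch. It assumes the fee is divided equally across every work in the training set, and both figures (a $100 million fee and two billion works) are hypothetical numbers chosen only for illustration, not drawn from any real licensing deal.

```python
def per_work_share(total_fee: float, num_works: int) -> float:
    """Split a fixed licensing fee equally across every training work."""
    if num_works <= 0:
        raise ValueError("num_works must be positive")
    return total_fee / num_works


# Hypothetical figures, for illustration only.
fee = 100_000_000        # assumed one-off licence fee, in dollars
works = 2_000_000_000    # assumed number of works in the training set

share = per_work_share(fee, works)
print(f"${share:.2f} per work")  # prints "$0.05 per work"
```

Under these assumed numbers each work earns five cents, once. A real scheme might weight works differently, but the scale of the division is the point.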

Would you accept a fraction of $1 for your music composition or oil painting to be used in a product that might then churn out endless imitations of your creative style without credit?

This is why many have now turned their attention to solving the problem of AI attribution, analysing datasets or generative networks to discern the relative weights of influence of traditional IP concepts such as songwriting, recording and performing – just as techniques of AI generation lay claim to being able to discern and extract ‘style’ and ‘content’ from creative materials.

So imagine someone used AI to generate a piece of guitar music, and the resulting piece of music sounded a little like Eddie Van Halen (whether intended or not). Putting a figure on that association, say it scored 10 percent in its ‘Van-Halen-ness’, with the remainder going to 10,000 other potential micro-influences (a bit of Beatles, a smidge of John Lee Hooker).
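As a rough sketch of what such a score could mean in money terms, the Python below splits a single payment in proportion to attribution weights. Everything beyond the 10 percent figure and the 10,000 micro-influences is an assumption made for illustration: the remaining 90 percent is spread equally, the `influence_N` names are placeholders, and the $1.00 payment is hypothetical. It says nothing about how the weights themselves would be measured.

```python
def split_payment(payment: float, weights: dict[str, float]) -> dict[str, float]:
    """Divide a payment among sources in proportion to their weights."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: payment * w / total for name, w in weights.items()}


# 10 percent 'Van-Halen-ness', as in the example above.
weights = {"Eddie Van Halen": 0.10}

# Assumption: the other 90 percent is shared equally by 10,000
# placeholder micro-influences.
n_other = 10_000
other_weight = (1.0 - 0.10) / n_other
weights.update({f"influence_{i}": other_weight for i in range(n_other)})

# Hypothetical payment of $1.00 for the generated piece.
payouts = split_payment(1.00, weights)
print(f"Van Halen share:      ${payouts['Eddie Van Halen']:.5f}")
print(f"Each micro-influence: ${payouts['influence_0']:.5f}")
```

With these assumptions, ten cents of each dollar goes to the Van Halen share and less than a hundredth of a cent to each micro-influence, which shows how much rides on the weights an attribution engine assigns.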

It is entirely conceivable, technically, to do something like this. And with the huge focus currently on generative AI and the means of training that AI, there are a lot of people looking at it. As well as potential pathways for remuneration, AI generation that afforded attribution would offer the basic respect of recognition – about which not just professionals but also amateur artists care deeply.

If handled appropriately, such attribution techniques could provide new insights into our own individual and cultural understanding of creativity as a social phenomenon – but only if those measures and techniques can be properly unpacked and debated.

At worst, artists would be forced to simply accept a dominant attribution engine, much as we accept the automated decisions of recommender engines – the algorithm-driven mystery machines that ‘suggest’ what we want to watch and listen to on any number of digital platforms. There’s a danger that the forward momentum behind generative AI, combined with this expectation of achievable attribution, forces an ill-considered and inequitable outcome.

Such attribution of indirect influences opens a very serious can of worms, where what is perceived as an influence, and measured as such by an opaque process, becomes taken as authoritative. In any such process, the emphasis should be on the idea that the musical and artistic styles of the world are more a part of our cultural commons than anyone’s private possessions.

And while artists may simply want to opt out of AI and ignore it, there’s a plausible scenario in which ‘big AI’ becomes a fragmented world where the only cultural data used is what has already been accumulated by large corporations, presenting new issues of access and inclusion.

It is impossible to tell whether automated attribution will become a significant part of our future. But it is essential that where it is used, it should not only be open to challenge through informed, open debate, but also have the potential to drive forward concepts of copyright for the public good.

Oliver Bown is Associate Professor at the School of Art & Design, UNSW Sydney. He is author of Beyond the Creative Species: Making Machines that Make Art & Music (MIT Press, 2021), now available as a free ePub from the MIT Press website. His research was funded by an ERC Advanced Grant: “Music and Artificial Intelligence: Building Critical Interdisciplinary Studies”.

Oliver Bown’s research is supported by a European Research Council Advanced Grant and an Australian Research Council Discovery Project grant.

Originally published under Creative Commons by 360info™.

Editor’s Note: In the story “AI and the arts” sent at: 28/03/2024 09:14.

This is a corrected repeat.