Sexual deepfake abuse silences women, causing lasting harm, and laws to protect them are inconsistent.

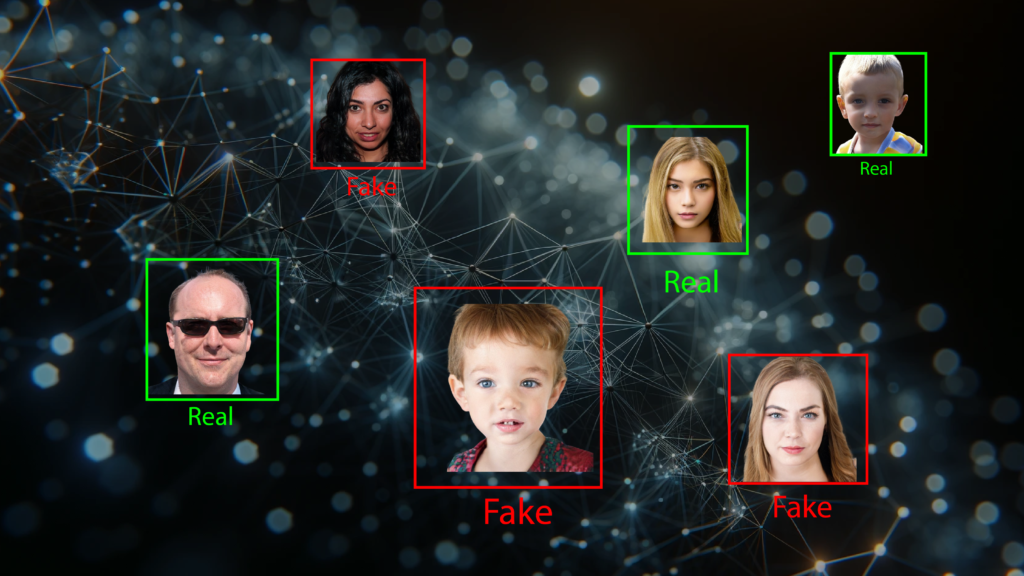

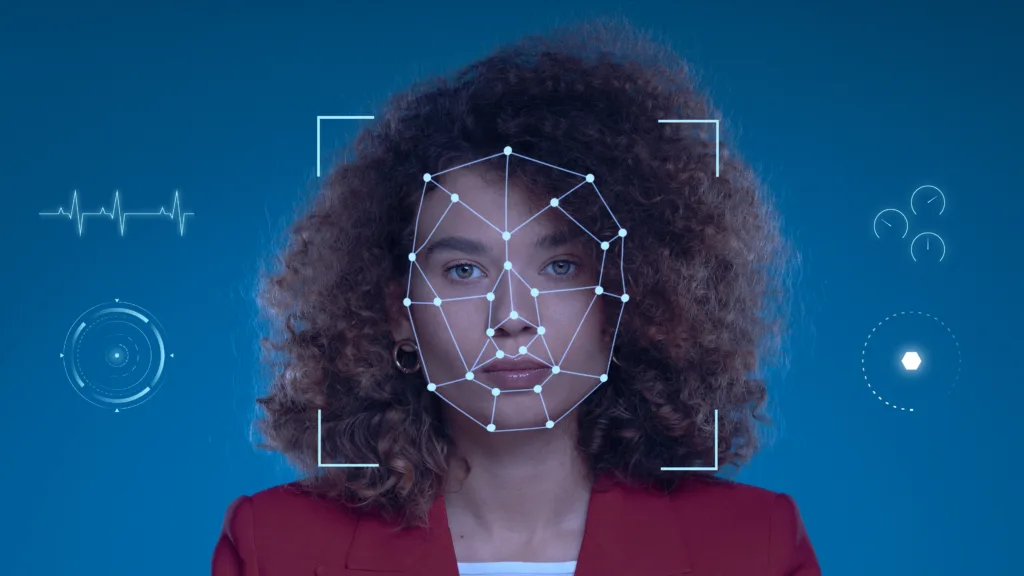

Digitally manipulated images or deepfake images pose significant ethical and legal challenges, prompting a reevaluation of existing laws and responses to such abuses. Image: Freepik

In early 2024, pop megastar Taylor Swift became the centre of a disturbing controversy.

Millions of sexually explicit deepfake images of her flooded social media, raising concerns about the misuse of this artificial intelligence (AI) technology. Only after one image was viewed more than 47 million times did the social media platform X (formerly Twitter) remove the content.

Swift’s case was a wake-up call about how easily people can use generative AI technology to create fake pornographic content without consent, leaving victims with few legal options and exposed to psychological, social, physical, economic and existential trauma.

The trend began in 2017, when a Reddit user uploaded realistic, but entirely fabricated, sexual imagery of female celebrities superimposed onto the bodies of pornography actors.

Seven years on, nudify apps are readily accessible and advertised freely on people’s social media feeds, including Instagram and X. In Australia, a Google search for ‘free deepnude apps’ brings up about 712,000 results.

A 2019 survey conducted across the UK, Australia and New Zealand found 14.1 percent of respondents aged between 16 and 84 had experienced someone creating, distributing or threatening to distribute a digitally altered image representing them in a sexualised way. People with disabilities, Indigenous Australians and LGBTQI+ respondents, as well as younger people between 16 and 29, were among the most victimised.

Sensity AI has been monitoring online sexualised deepfake video content since 2018 and has consistently found that around 90 percent of this non-consensual video content features women.

What happened to Swift is sadly nothing new, as there have been numerous reports of sexualised deepfakes being created and shared involving women celebrities, young women and teenage girls.

Legal ambiguities

These digitally manipulated images pose significant ethical and legal challenges, prompting a reevaluation of existing laws and responses to such abuses.

The challenge is even more complicated on platforms with encrypted content, such as WhatsApp, where deepfakes may be shared without fear of detection or moderation.

The need for change has been recognised in a variety of forums, including the public campaigning of victim-survivors following the deepfakes of Swift, comments from Australia’s federal communications minister and the US House of Representatives’ March 12 hearing on the harms of sexualised deepfake abuse.

Australia has led the way in criminalising image-based abuse and its harms, as well as providing alternative avenues, such as the image-based abuse victim reporting portal facilitated by the eSafety Commissioner, who also has legal powers to compel individuals, platforms and websites to remove sexualised deepfake content.

Except for the state of Tasmania, the distribution or threat to distribute sexualised deepfakes of an adult without their consent is captured under Australia’s existing image-based abuse laws.

However, the non-consensual production or creation of a sexualised deepfake of an adult is not specifically captured under Australian law, except in the state of Victoria.

Elsewhere in Australia, there is much ambiguity as to whether non-consensually creating or producing a sexualised deepfake of another adult is a crime, and whether possession of such non-consensual content is a crime.

It’s the same around the world.

In the UK, the Online Safety Act 2023 criminalises non-consensually sharing, or threatening to share, a sexualised deepfake of an adult, but it does not cover the production or creation of sexualised deepfake imagery.

In the US, there is currently no national law criminalising either the creation or distribution of sexualised deepfake imagery of an adult without consent. However, much like Australian states and territories, some states have criminalised the non-consensual distribution of sexualised deepfake imagery of adults, and at least three states — Hawaii, Louisiana and Texas — have amended laws to include the non-consensual creation of sexualised deepfake imagery.

A UK review ranked deepfakes as the most serious social and criminal threat posed by AI. With claims that open-source technology producing deepfakes ‘impossible to detect as fake’ will soon be freely accessible to all, there is a need to improve legal and other responses.

A new Australian Research Council study seeks to do just this, exploring sexualised deepfake abuse, including the number of victims and perpetrators, the consequences, predictors and harms across Australia, the UK and the US, with a primary focus on improving responses, interventions and prevention.

The ambiguity around the illegality of creating, producing and possessing non-consensual sexualised deepfake imagery of adults suggests that further legal change is required to provide more appropriate responses to sexualised deepfake abuse.

It may also go some way towards curbing the accessibility of sexualised deepfake technologies. If it were illegal to create or produce non-consensual deepfake imagery, it would likely reduce the capacity for technologies such as nudify apps to be advertised.

It is important that any new or amended laws are introduced alongside other responses which incorporate regulatory and corporate responsibility, education and prevention campaigns, training for those tasked with investigating and responding to sexualised deepfake abuse, and technical solutions that seek to disrupt and prevent the abuse.

Responsibility should also be placed on technology developers, digital platforms and websites that host and/or create the tools used to develop deepfake content, to ensure safety by design and to put people above profit.

Outside of sexualised deepfake abuse, there is a pressing need for guidelines around the responsible creation of deepfake content, whether to avoid the spread of disinformation or to avoid gendered or racial bias, as was the case with the sexually altered image of Victorian MP Georgie Purcell.

Italian Prime Minister Giorgia Meloni is seeking €100,000 in damages from two men after deepfake pornographic images using her face were circulated online. According to Meloni’s lawyer, the sum is “symbolic” and the demand for compensation is intended to empower women to not be afraid to press charges.

As identified elsewhere, a key problem with AI image manipulation is that these tools rely on the biased social norms and information that our human society has generated.

As the use of AI and digital image manipulation becomes more mainstream, as with the controversial edited photo of the British royal family, there should be ethical guidelines around how deepfake content is created, shared and discussed.

There are long-standing legal precedents in many countries for regulating deceitful, harmful expressions that others perceive as true. Guidelines and regulations pertaining to the ethical and responsible creation of deepfake content could sit within a similar framework.

Given the truly transnational nature of this challenge, it is important that global action, collaboration and responses are facilitated, which focus on preventing harm and ensuring responsible and ethical content development.

A global approach is vital if society truly wants to address and prevent the harms of sexualised deepfake abuse.

Dr Asher Flynn is an Associate Professor of Criminology at Monash University and is Deputy Lead and Chief Investigator with the Australian Research Council Centre of Excellence: the Centre for the Elimination of Violence Against Women (CEVAW).

Dr Anastasia Powell is a Professor of Family & Sexual Violence, in Criminology and Justice Studies at RMIT University. She is a board director of Our Watch and a member of the National Women’s Safety Alliance.

Dr Asia Eaton is a feminist social psychologist and Professor of Psychology at Florida International University (FIU). Since 2016 Asia has also served as Head of Research for Cyber Civil Rights Initiative (CCRI).

Dr Adrian Scott is a Reader in Psychology and Co-Director of the Forensic Psychology Unit at Goldsmiths, University of London. He is also a Chartered Psychologist within the British Psychological Society.

Originally published under Creative Commons by 360info™.

Editor’s note: In the story “AI and the arts” sent at: 28/03/2024 06:00.

This is a corrected repeat.